AI Optimization Tools – Enhance AI Performance and Efficiency

In the rapidly evolving world of artificial intelligence, AI optimization tools have become essential for researchers, developers, and businesses looking to improve model performance, reduce computational costs, and streamline AI workflows. These tools are not just about speeding up training; they help AI systems use resources more efficiently and deliver better results.

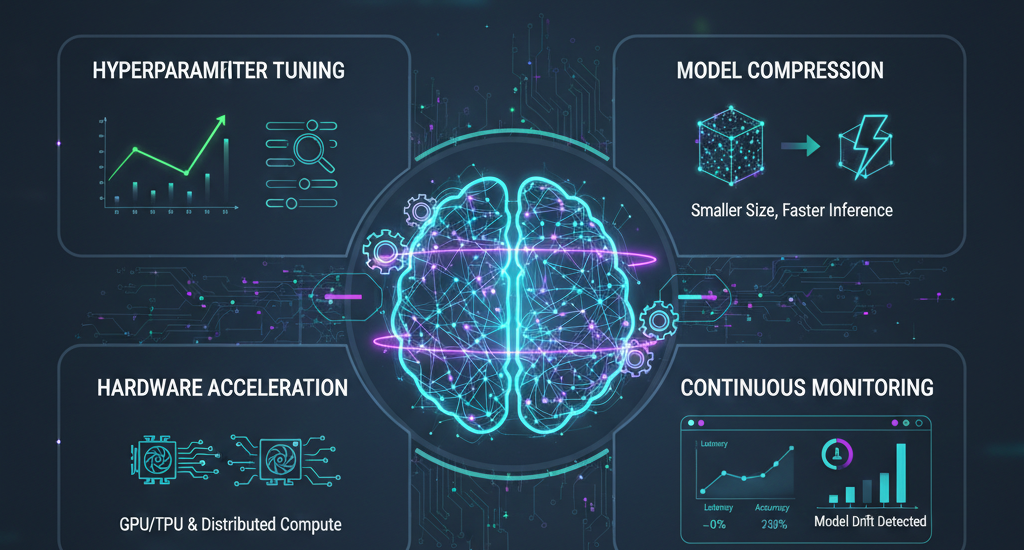

AI optimization tools encompass a variety of solutions, including hyperparameter tuning software, model compression platforms, AutoML solutions, and hardware acceleration frameworks. They help data scientists and engineers maximize accuracy while minimizing resource usage, making it possible to deploy advanced AI models even with limited infrastructure.

Why AI Optimization Tools Are Critical Today:

- Faster AI Training and Inference: Reduce the time it takes to train large-scale machine learning and deep learning models.

- Improved Model Accuracy: Optimize hyperparameters and streamline architectures to ensure models perform at their best.

- Cost Efficiency: Save on computational resources by pruning unnecessary model parameters and leveraging hardware acceleration.

- Simplified AI Workflows: Automate repetitive tasks like tuning, deployment, and performance monitoring.

Example: A financial services company implemented AI optimization tools to fine-tune predictive models for credit scoring. By using automated hyperparameter tuning and model compression techniques, they reduced processing time by 40% and improved prediction accuracy by 18%, allowing faster, data-driven decisions across the organization.

In this guide, we will explore everything you need to know about AI optimization tools: what they are, their types, how they work, popular platforms, use cases, challenges, and the future of AI optimization. Whether you are a researcher, developer, or business professional, this article provides comprehensive insights to help you choose the right optimization tools for your AI projects.

What Are AI Optimization Tools?

AI optimization tools are specialized software solutions designed to enhance the performance, efficiency, and scalability of artificial intelligence models. Unlike standard AI frameworks that primarily focus on building models, optimization tools are tailored to improve speed, accuracy, and resource utilization, allowing AI systems to deliver better results while using fewer computational resources.

These tools are crucial for both research and enterprise AI projects, as they address common challenges like slow model training, overfitting, high hardware costs, and inefficient deployment. By incorporating hyperparameter tuning, model compression, and performance monitoring, AI optimization tools enable teams to focus more on innovation rather than troubleshooting performance bottlenecks.

Key Features of AI Optimization Tools

The most effective AI optimization tools provide a range of functionalities to support the AI lifecycle:

- Hyperparameter Tuning Automation – Automatically tests combinations of parameters to identify the optimal configuration for a model.

- Model Pruning and Compression – Reduces model size by removing redundant neurons or layers, without significantly impacting accuracy.

- Hardware Acceleration – Leverages GPUs, TPUs, or distributed computing to speed up model training and inference.

- Performance Monitoring and Analytics – Tracks metrics like latency, memory usage, and accuracy to ensure continuous optimization.

- Integration with ML Frameworks – Works seamlessly with platforms like TensorFlow, PyTorch, and scikit-learn for smooth workflows.

Benefits of Using AI Optimization Tools

Adopting AI optimization tools can deliver significant advantages for organizations and researchers alike:

- Faster Model Development: Automated tuning and acceleration shorten model training, in some cases by as much as 50%.

- Higher Accuracy and Reliability: Optimization ensures models are fine-tuned to deliver the best possible performance.

- Reduced Costs: Efficient models use less hardware and energy, lowering operational expenses.

- Scalability: Optimized models can handle larger datasets and more complex tasks without degrading performance.

- Simplified Workflow: Researchers spend less time manually adjusting models and more time on innovation.

Case Study Example:

A healthcare AI startup leveraged AI optimization tools to refine a neural network predicting patient readmission risk. Using hyperparameter tuning and model compression, they improved prediction accuracy by 15% while reducing computational costs by 30%, allowing faster and more reliable deployment in hospitals.

Types of AI Optimization Tools

AI optimization tools are diverse, catering to different needs in machine learning, deep learning, and AI deployment workflows. Understanding the types helps businesses and researchers select the right tools for their projects. The main categories include hyperparameter optimization tools, model compression platforms, AutoML solutions, hardware acceleration frameworks, and monitoring tools.

Hyperparameter Optimization Tools

Hyperparameters are critical settings that influence how a machine learning model learns and performs. Hyperparameter optimization tools automate the process of testing multiple parameter combinations to find the best-performing configuration.

Examples:

- Optuna – Flexible Python framework for automated hyperparameter search.

- Hyperopt – Popular tool that tunes models with Bayesian-style optimization based on the Tree-structured Parzen Estimator (TPE).

- Ray Tune – Scalable solution for distributed hyperparameter optimization.

Benefits:

- Reduces trial-and-error experimentation

- Accelerates model development

- Improves model accuracy and reliability

Use Case: A fintech startup used Optuna to tune a credit scoring model. Automated hyperparameter optimization improved prediction accuracy by 12% compared to manual tuning.
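
The core loop that these tools automate can be sketched in plain Python. The example below is a minimal random search, not the API of Optuna, Hyperopt, or Ray Tune. The `validation_loss` function is a hypothetical stand-in for training a model and measuring its validation loss, and the parameter names and ranges are assumptions chosen for illustration.

```python
import random


def validation_loss(learning_rate, num_layers):
    """Hypothetical stand-in for training a model and returning validation loss."""
    # Assumed for illustration: the loss is lowest at learning_rate=0.01, num_layers=3.
    return (learning_rate - 0.01) ** 2 * 1e4 + (num_layers - 3) ** 2


def random_search(n_trials, seed=0):
    """Sample configurations at random and keep the one with the lowest loss."""
    rng = random.Random(seed)
    best_params, best_loss = None, float("inf")
    for _ in range(n_trials):
        params = {
            # Sample the learning rate log-uniformly between 1e-4 and 1e-1.
            "learning_rate": 10 ** rng.uniform(-4, -1),
            "num_layers": rng.randint(1, 6),
        }
        loss = validation_loss(**params)
        if loss < best_loss:
            best_params, best_loss = params, loss
    return best_params, best_loss


best_params, best_loss = random_search(n_trials=200)
print(best_params, round(best_loss, 4))
```

Dedicated tools build on this loop by choosing the next trial more intelligently, stopping unpromising trials early, and running trials in parallel.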

Model Compression and Pruning Tools

Model compression tools reduce the size of AI models while maintaining performance. Pruning removes redundant neurons or layers, making models faster, more memory-efficient, and deployment-ready.

Examples:

- TensorRT – NVIDIA’s inference optimization tool for deep learning models.

- ONNX – Open standard for model interoperability; the companion ONNX Runtime applies graph optimizations and quantization to models in this format.

- DeepSpeed – Optimizes large-scale transformer models for faster training and inference.

Benefits:

- Faster inference times

- Lower memory and computational requirements

- Easier deployment on edge devices or cloud environments

Use Case: An autonomous vehicle company used TensorRT to compress vision models, reducing inference latency by 40% without losing accuracy, enabling real-time navigation.
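
To make the idea of pruning concrete, here is a minimal sketch of unstructured magnitude pruning, which zeroes individual weights rather than whole neurons or layers. It is a simplified illustration, not how TensorRT, ONNX, or DeepSpeed implement compression. The `magnitude_prune` helper and the example weights are hypothetical, and the weight matrix is assumed to be rectangular.

```python
def magnitude_prune(weights, sparsity):
    """Zero out the smallest-magnitude fraction of weights (unstructured pruning)."""
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be between 0 and 1")
    flat = [w for row in weights for w in row]
    n_prune = int(len(flat) * sparsity)
    # Indices of the n_prune weights with the smallest absolute value.
    order = sorted(range(len(flat)), key=lambda i: abs(flat[i]))
    to_zero = set(order[:n_prune])
    n_cols = len(weights[0])
    return [
        [0.0 if (r * n_cols + c) in to_zero else w for c, w in enumerate(row)]
        for r, row in enumerate(weights)
    ]


layer = [[0.8, -0.05, 0.3], [0.01, -0.9, 0.02]]
pruned = magnitude_prune(layer, sparsity=0.5)
print(pruned)  # [[0.8, 0.0, 0.3], [0.0, -0.9, 0.0]]
```

In practice a pruned model is usually fine-tuned afterwards to recover accuracy, and the zeros only save time or memory when the runtime can exploit the sparsity.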

Automated Machine Learning (AutoML) Platforms

AutoML platforms automate key steps in the machine learning workflow, including model selection, hyperparameter tuning, and deployment. They are particularly useful for teams without deep AI expertise.

Examples:

- Google Cloud AutoML – User-friendly platform for training custom ML models.

- H2O.ai – Enterprise-ready AutoML with support for tabular data and deep learning models.

- DataRobot – Comprehensive AutoML platform for predictive analytics and AI model deployment.

Benefits:

- Speeds up AI adoption for non-experts

- Reduces development effort and errors

- Supports rapid prototyping and production deployment

Use Case: A retail company implemented H2O.ai AutoML to forecast inventory demand. Automated optimization reduced forecasting errors by 20% and shortened the model-building cycle from weeks to days.

Hardware Acceleration and Parallelization Tools

Hardware optimization tools leverage GPUs, TPUs, and distributed computing to improve model training speed and handle large datasets efficiently.

Examples:

- CUDA – NVIDIA's parallel computing platform and programming model for GPU-accelerated computation.

- Horovod – Distributed training framework for TensorFlow and PyTorch.

- TensorRT – Also used for hardware-based inference acceleration.

Benefits:

- Shortens training time for large models

- Enables scaling to complex and large datasets

- Optimizes resource usage for cost efficiency

Use Case: A large NLP research team used Horovod for distributed training of transformer models, reducing training time from 10 days to 3 days on a multi-GPU setup.
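
The principle behind synchronous data-parallel training can be sketched without any GPU. In the simplified example below, each "worker" computes a gradient on its own shard of the batch, and the gradients are averaged before the weight update. The averaging stands in for the all-reduce step a distributed framework would perform. This is an illustration under stated assumptions (a one-parameter linear model, a batch that divides evenly across workers), not Horovod's API.

```python
def gradient(w, batch):
    """Gradient of mean squared error for the model y = w * x over one batch."""
    return sum(2 * (w * x - y) * x for x, y in batch) / len(batch)


def data_parallel_step(w, batch, n_workers, lr):
    """One synchronous step: shard the batch, compute local gradients, average."""
    # Assumes len(batch) is divisible by n_workers so all shards are equal in size.
    shard_size = len(batch) // n_workers
    shards = [batch[i * shard_size:(i + 1) * shard_size] for i in range(n_workers)]
    # Each worker would compute this on its own device; here it runs in a loop.
    local_grads = [gradient(w, shard) for shard in shards]
    # Averaging stands in for the all-reduce step of a distributed framework.
    avg_grad = sum(local_grads) / n_workers
    return w - lr * avg_grad


data = [(float(x), 3.0 * x) for x in range(1, 9)]  # samples from y = 3x
w = 0.0
for _ in range(100):
    w = data_parallel_step(w, data, n_workers=4, lr=0.01)
print(round(w, 3))
```

With equal-sized shards, the averaged gradient matches the full-batch gradient, so the speedup comes from computing the shards at the same time rather than from changing the math.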

Monitoring and Performance Analytics Tools

Monitoring and analytics tools track AI model performance, resource utilization, and efficiency, enabling continuous optimization in production environments.

Examples:

- MLflow – Tracks experiments, logs metrics, and manages model lifecycle.

- Weights & Biases – Provides visualization dashboards and performance tracking for ML models.

Benefits:

- Detects bottlenecks and performance degradation

- Ensures AI models maintain high efficiency in production

- Supports reproducibility and collaborative research

Use Case: A healthcare AI startup used Weights & Biases to monitor real-time model performance across multiple hospitals, quickly identifying underperforming models and retraining them for improved accuracy.
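
At its simplest, experiment tracking means recording the parameters and metrics of every run so that results can be compared and reproduced. The sketch below shows that idea with a hypothetical in-memory `RunLog` class. It is not the MLflow or Weights & Biases API, and the run names and metric values are made up for illustration.

```python
import json


class RunLog:
    """Hypothetical in-memory experiment log: one record per training run."""

    def __init__(self):
        self.runs = []

    def log_run(self, name, params, metrics):
        self.runs.append({"name": name, "params": params, "metrics": metrics})

    def best(self, metric, higher_is_better=True):
        """Return the run with the best value of the given metric."""
        pick = max if higher_is_better else min
        return pick(self.runs, key=lambda run: run["metrics"][metric])

    def to_json(self):
        # Serialising the log keeps the parameters needed to reproduce each run.
        return json.dumps(self.runs, indent=2, sort_keys=True)


log = RunLog()
log.log_run("baseline", {"lr": 0.1}, {"accuracy": 0.81, "latency_ms": 42})
log.log_run("tuned", {"lr": 0.01}, {"accuracy": 0.88, "latency_ms": 45})
print(log.best("accuracy")["name"])  # tuned
print(log.best("latency_ms", higher_is_better=False)["name"])  # baseline
```

Production tools add persistent storage, dashboards, and artifact versioning on top of this basic record-keeping.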

Summary Table: Types of AI Optimization Tools

| Type | Key Features | Example Tools | Benefits |
|---|---|---|---|
| Hyperparameter Optimization | Automated tuning, trial testing | Optuna, Hyperopt, Ray Tune | Improved accuracy, faster experimentation |
| Model Compression & Pruning | Pruning, quantization | TensorRT, ONNX, DeepSpeed | Faster inference, smaller model size |
| AutoML Platforms | Automated model selection & deployment | Google AutoML, H2O.ai, DataRobot | Rapid prototyping, accessible to non-experts |
| Hardware Acceleration | GPU/TPU support, distributed computing | CUDA, Horovod, TensorRT | Faster training, scalable workloads |
| Monitoring & Analytics | Performance tracking, metrics logging | MLflow, Weights & Biases | Continuous optimization, reproducibility |

How AI Optimization Tools Work

AI optimization tools are designed to streamline AI workflows, improve model efficiency, and maximize performance. Their operation revolves around a systematic approach that targets bottlenecks, reduces computational load, and enhances accuracy. Understanding how these tools function is essential for businesses, researchers, and developers who want to get the most out of their AI projects.

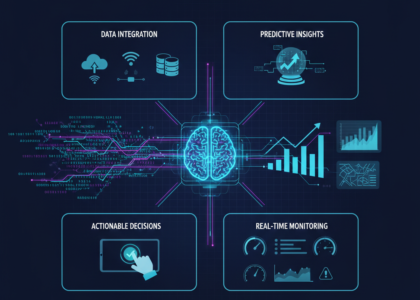

The workflow of AI optimization tools generally involves four core stages: data preparation, model optimization, hardware acceleration, and performance monitoring.

Data Preparation and Preprocessing

Before optimization begins, AI models require high-quality data. Many AI optimization tools provide integrated data preprocessing and management features:

- Data Cleaning: Remove inconsistencies, handle missing values, and normalize datasets for reliable training.

- Data Labeling & Annotation: Automate labeling for supervised learning tasks using prebuilt tools.

- Data Augmentation: Expand datasets with synthetic variations to improve model robustness.

Example: A computer vision project used AI optimization tools to automatically preprocess thousands of images for defect detection in manufacturing. This reduced manual preprocessing time by 50% while maintaining high data quality.
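
As a small illustration of the data cleaning step, the sketch below fills missing values with the column mean and then rescales the column to the range [0, 1]. The helper names and the sample column are hypothetical, and mean imputation with min-max scaling is only one of several reasonable choices.

```python
def impute_mean(values):
    """Replace missing entries (None) with the mean of the observed values."""
    observed = [v for v in values if v is not None]
    if not observed:
        raise ValueError("column has no observed values")
    mean = sum(observed) / len(observed)
    return [mean if v is None else v for v in values]


def min_max_scale(values):
    """Rescale values linearly to the range [0, 1]."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]  # constant column: no spread to scale
    return [(v - lo) / (hi - lo) for v in values]


raw = [10.0, None, 30.0, 20.0]
cleaned = min_max_scale(impute_mean(raw))
print(cleaned)  # [0.0, 0.5, 1.0, 0.5]
```

When a model is trained on scaled data, the same minimum and maximum from the training set should be reused at inference time so that new inputs are transformed consistently.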

Model Optimization Techniques

AI optimization tools improve models using several techniques, depending on the type of model and task:

- Hyperparameter Tuning: Tools like Optuna or Hyperopt test combinations of parameters automatically, identifying the best configuration for the highest accuracy.

- Model Pruning: Unnecessary neurons or layers are removed to reduce size without sacrificing performance.

- Quantization: Converts models to lower-precision formats, reducing memory and speeding up inference.

- Knowledge Distillation: Transfers learning from large models to smaller, more efficient ones.

Benefit: These techniques reduce training time, improve model accuracy, and make models suitable for edge deployment or cloud-based applications.
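
Knowledge distillation is the least intuitive of these techniques, so a short sketch may help. The student model is trained to match the teacher's softened output probabilities, and the mismatch is measured with a KL divergence. The logits below are made-up values, and the function names are hypothetical. Real training setups typically combine this term with the ordinary loss on the true labels.

```python
import math


def softmax(logits, temperature=1.0):
    """Convert logits to probabilities; higher temperature gives softer targets."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """KL divergence from the teacher's softened outputs to the student's."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


teacher = [4.0, 1.0, 0.5]
print(distillation_loss([3.5, 1.2, 0.4], teacher))  # close to the teacher: small loss
print(distillation_loss([0.5, 1.0, 4.0], teacher))  # far from the teacher: larger loss
```

The temperature controls how much of the teacher's "second choice" information reaches the student: at higher temperatures the probabilities of the less likely classes become more visible.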

Case Study: A healthcare AI platform applied model pruning and quantization to a diagnostic CNN model, reducing its size by 60% and inference time by 45% while maintaining the same accuracy for tumor detection.
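
Quantization can be illustrated with a simple per-tensor scheme that maps floating-point weights to 8-bit integer codes and back. The sketch below is a simplified illustration under stated assumptions, not the scheme any particular platform uses. The function names and example weights are hypothetical.

```python
def quantize_int8(values):
    """Map floats to int8 codes with a per-tensor scale and zero point."""
    lo, hi = min(values), max(values)
    lo, hi = min(lo, 0.0), max(hi, 0.0)  # keep 0.0 inside the representable range
    scale = (hi - lo) / 255 or 1.0       # avoid a zero scale for all-zero input
    zero_point = round(-128 - lo / scale)
    codes = [max(-128, min(127, round(v / scale) + zero_point)) for v in values]
    return codes, scale, zero_point


def dequantize(codes, scale, zero_point):
    """Recover approximate float values from int8 codes."""
    return [(c - zero_point) * scale for c in codes]


weights = [-1.0, -0.25, 0.0, 0.5, 1.5]
codes, scale, zero_point = quantize_int8(weights)
restored = dequantize(codes, scale, zero_point)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(codes, round(max_error, 4))
```

Each int8 code takes one byte instead of the four bytes of a 32-bit float, which is where the memory saving comes from. The price is a small rounding error, bounded here by roughly one quantization step.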

Hardware Acceleration and Parallelization

Many AI optimization tools leverage specialized hardware like GPUs, TPUs, or multi-node clusters to accelerate computations:

- GPU/TPU Acceleration: Enables large-scale matrix computations and neural network training in hours rather than days.

- Distributed Training: Frameworks like Horovod or DeepSpeed allow models to train across multiple GPUs simultaneously.

- Memory Optimization: Efficient resource management reduces bottlenecks during model training and inference.

Example: A large NLP research team trained a transformer model across 8 GPUs using DeepSpeed. The training time dropped from 10 days to just 3 days, enabling faster iteration on research experiments.

Performance Monitoring and Continuous Optimization

Optimization doesn’t stop at model training. AI optimization tools also provide continuous monitoring to ensure models remain efficient and accurate over time:

- Metrics Tracking: Monitors latency, memory usage, throughput, and accuracy.

- Experiment Logging: Keeps track of all model versions, hyperparameters, and datasets for reproducibility.

- Alerts & Retraining: Detects performance drops or data drift and triggers retraining automatically.

Example: A retail company used Weights & Biases to monitor demand forecasting models. Real-time tracking allowed the team to quickly retrain underperforming models, reducing stock shortages by 15%.
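
The alerting logic behind such monitoring can be sketched as a rolling-window check. The hypothetical `AccuracyMonitor` class below flags a model for retraining when its recent accuracy falls more than a set tolerance below a baseline. The window size, tolerance, and sample outcomes are assumptions for illustration, and this is not the API of any monitoring product.

```python
from collections import deque


class AccuracyMonitor:
    """Hypothetical monitor: flags retraining when rolling accuracy drops."""

    def __init__(self, baseline, window=50, tolerance=0.05):
        self.baseline = baseline
        self.tolerance = tolerance
        self.outcomes = deque(maxlen=window)  # 1 = correct, 0 = wrong

    def record(self, correct):
        self.outcomes.append(1 if correct else 0)

    def rolling_accuracy(self):
        return sum(self.outcomes) / len(self.outcomes) if self.outcomes else None

    def needs_retraining(self):
        # Wait for a full window so a few early errors do not trigger an alert.
        if len(self.outcomes) < self.outcomes.maxlen:
            return False
        return self.rolling_accuracy() < self.baseline - self.tolerance


monitor = AccuracyMonitor(baseline=0.90, window=10, tolerance=0.05)
for correct in [True] * 9 + [False]:
    monitor.record(correct)
print(monitor.needs_retraining())  # rolling accuracy 0.9: no alert
for correct in [False] * 4:
    monitor.record(correct)
print(monitor.needs_retraining())  # rolling accuracy 0.5: alert
```

This check relies on ground-truth labels arriving after predictions are made. When labels are delayed or unavailable, teams often monitor shifts in the input data distribution instead.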

Summary Table: Workflow of AI Optimization Tools

| Stage | Techniques & Tools | Benefits |
|---|---|---|
| Data Preparation | Cleaning, labeling, augmentation | High-quality data for reliable training |
| Model Optimization | Hyperparameter tuning, pruning, quantization, knowledge distillation | Faster training, smaller models, improved accuracy |
| Hardware Acceleration | GPU/TPU, distributed training, memory optimization | Reduced training time, scalable workloads |
| Monitoring & Continuous Optimization | Metrics tracking, experiment logging, retraining | Maintain efficiency, detect drift, reproducibility |

Popular AI Optimization Tools and Platforms

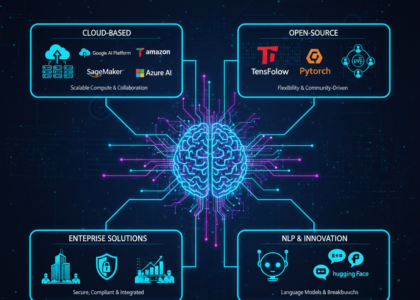

AI optimization tools come in a variety of forms, from open-source frameworks for researchers to enterprise-grade platforms for businesses. Choosing the right tool depends on your project goals, technical expertise, and computational resources. Below is a breakdown of the most widely used AI optimization tools and platforms, along with their features and real-world applications.

Optuna & Hyperopt – Hyperparameter Optimization

Optuna and Hyperopt are two of the most popular tools for automated hyperparameter tuning. They help data scientists test multiple parameter configurations efficiently, improving model performance without manual trial-and-error.

Key Features:

- Support random search and Bayesian-style (TPE) optimization; Optuna also offers grid search

- Easy integration with Python ML frameworks like TensorFlow, PyTorch, and scikit-learn

- Scalable for distributed computing environments

Use Case: A fintech startup used Optuna to tune a loan approval prediction model. Automated hyperparameter optimization improved prediction accuracy by 12%, reducing manual experimentation time by weeks.
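
Grid search is the simplest of these search strategies to write out by hand. The sketch below evaluates every combination in a small search space and keeps the best one. The `score` function is a hypothetical stand-in for a cross-validated model score, and the parameter names and values are assumptions. The code does not use the Optuna or Hyperopt API.

```python
import itertools


def score(params):
    """Hypothetical stand-in for a cross-validated model score (higher is better)."""
    return -abs(params["max_depth"] - 5) - 10 * abs(params["learning_rate"] - 0.1)


def grid_search(search_space):
    """Evaluate every combination in the grid and return the best one."""
    names = list(search_space)
    best_params, best_score = None, float("-inf")
    for combo in itertools.product(*(search_space[n] for n in names)):
        params = dict(zip(names, combo))
        current = score(params)
        if current > best_score:
            best_params, best_score = params, current
    return best_params, best_score


space = {"max_depth": [3, 5, 7], "learning_rate": [0.01, 0.1, 0.3]}
best_params, best_score = grid_search(space)
print(best_params)  # {'max_depth': 5, 'learning_rate': 0.1}
```

The number of evaluations is the product of the list lengths (nine here), so the cost grows quickly as parameters are added. That growth is the main reason random and Bayesian-style search are preferred for larger search spaces.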

TensorRT & ONNX – Model Compression and Deployment Optimization

TensorRT and ONNX are widely used for model optimization and deployment, particularly for deep learning models:

Key Features:

- Model pruning, quantization, and conversion for faster inference

- Optimized for GPUs and edge devices

- Interoperable with multiple ML frameworks

Use Case: An autonomous vehicle company used TensorRT to compress object detection models. This reduced inference latency by 40% while maintaining accuracy, enabling real-time navigation.

AutoML Platforms – Google Cloud AutoML, H2O.ai, DataRobot

AutoML platforms automate key steps in AI workflows, including model selection, hyperparameter tuning, and deployment. These platforms are especially useful for businesses with limited AI expertise.

Key Features:

- User-friendly interfaces for non-technical users

- Supports tabular, text, and image data

- End-to-end workflow: training, evaluation, and deployment

Use Case: A retail company used H2O.ai AutoML to forecast inventory demand. Automated model selection and tuning reduced forecasting errors by 20% and cut model development time from weeks to days.

DeepSpeed & Horovod – Hardware Acceleration and Distributed Training

For large-scale AI projects, hardware acceleration tools are essential to reduce training time and scale models efficiently.

Key Features:

- GPU acceleration

- Multi-node distributed training

- Memory optimization for large models

Use Case: A research lab used Horovod for distributed training of a transformer NLP model. Training time dropped from 10 days to 3 days, enabling faster iteration and experimentation.

MLflow & Weights & Biases – Monitoring and Analytics

Performance tracking and analytics tools allow continuous monitoring of AI models in production:

Key Features:

- Logs metrics such as latency, accuracy, and resource usage

- Tracks experiments, datasets, and model versions

- Visual dashboards for collaborative monitoring

Use Case: A healthcare startup used Weights & Biases to monitor real-time diagnostic models. Continuous tracking enabled immediate retraining of underperforming models, improving overall diagnostic accuracy by 15%.

Summary Table: Popular AI Optimization Tools

| Tool/Platform | Type | Key Features | Real-World Use Case |
|---|---|---|---|
| Optuna | Hyperparameter Optimization | Automated tuning, scalable, integrates with ML frameworks | Improved fintech credit scoring accuracy |
| Hyperopt | Hyperparameter Optimization | Bayesian optimization, flexible search | ML experimentation for NLP models |
| TensorRT | Model Compression & Deployment | Pruning, quantization, GPU acceleration | Autonomous vehicle object detection |
| ONNX | Model Interoperability & Optimization | Converts models across frameworks, inference optimization | Edge deployment for AI apps |
| H2O.ai | AutoML | Model selection, automated tuning | Retail inventory demand forecasting |
| Google AutoML | AutoML | End-to-end AI workflows, easy interface | Custom image and text classification |
| DataRobot | AutoML | Enterprise predictive analytics, deployment | Fraud detection and predictive modeling |
| DeepSpeed | Hardware Acceleration | Distributed training, memory optimization | Large-scale NLP transformer training |
| Horovod | Distributed Training | Multi-node, multi-GPU support | Scalable research experiments |
| MLflow | Monitoring & Analytics | Experiment logging, metrics tracking | Continuous model optimization |
| Weights & Biases | Monitoring & Analytics | Visualization dashboards, performance monitoring | Real-time monitoring for healthcare AI |

Benefits of Using AI Optimization Tools for Businesses and Researchers

Adopting AI optimization tools offers significant advantages for both researchers and organizations. These tools not only improve model performance but also streamline workflows, reduce costs, and accelerate innovation. Whether you are building AI models for enterprise applications, research projects, or commercial products, the benefits are substantial.

Faster Model Training and Inference

One of the most immediate benefits of AI optimization tools is reduced training and inference time. By leveraging hyperparameter tuning, model pruning, and hardware acceleration, these tools can cut training times substantially, in some cases by 50% or more.

Example: A logistics company used GPU optimization to train computer vision models for package sorting and TensorRT to speed up their inference. Training time was reduced from several days to less than 24 hours, enabling faster deployment and real-time operation.

Improved Model Accuracy and Reliability

AI optimization tools help fine-tune models to maximize accuracy and reliability. Automated tuning of hyperparameters, along with monitoring and continuous optimization, ensures models perform consistently, even with evolving datasets.

Example: A healthcare AI startup applied hyperparameter optimization and monitoring tools to a predictive model for patient readmission. Accuracy improved by 15%, resulting in better clinical outcomes and decision-making.

Reduced Computational Costs

Optimized models are smaller, faster, and more efficient, which lowers the need for expensive hardware resources. Model pruning, quantization, and distributed computing enable organizations to use fewer GPUs/TPUs and still maintain high performance.

Example: A financial services firm adopted DeepSpeed for training large transformer models. By reducing memory usage and distributing workloads across GPUs, they cut infrastructure costs by 30%.

Scalability for Large Projects

AI optimization tools enable models to handle larger datasets and more complex tasks without performance degradation. This is particularly important for enterprise AI and research environments that require scalable solutions.

Example: A multinational retail chain used AutoML platforms to scale demand forecasting models across multiple regions. Optimized workflows allowed simultaneous deployment for thousands of stores, improving efficiency and planning accuracy.

Simplified AI Workflows

By automating tedious tasks such as hyperparameter tuning, performance tracking, and deployment, AI optimization tools simplify the workflow for data scientists and developers. Teams can focus more on innovation rather than repetitive trial-and-error processes.

Example: A small AI startup implemented H2O.ai AutoML. The platform automated model selection, hyperparameter tuning, and deployment, allowing their limited team to deliver enterprise-grade models faster than traditional manual approaches.

Summary Table: Key Benefits of AI Optimization Tools

| Benefit | Description | Example |
|---|---|---|
| Faster Training & Inference | Reduce time to train and deploy models | Logistics company reduced training from days to hours |
| Improved Accuracy | Fine-tuned models with higher reliability | Healthcare startup improved predictive accuracy by 15% |
| Reduced Costs | Efficient use of hardware and resources | Financial firm cut GPU costs by 30% |
| Scalability | Handle large datasets and complex tasks | Retail chain deployed forecasts for thousands of stores |
| Simplified Workflow | Automation reduces manual effort | Small AI startup delivered enterprise-grade models faster |